What is Cerebras Systems? The Wafer-Scale AI Chip Challenging NVIDIA’s Inference Dominance

【この記事にはPRを含む場合があります】

While modern AI models continue to grow in intelligence, a major bottleneck has plagued the industry: real-time responsiveness. Running highly advanced AI models requires massive computational resources, a market historically monopolized by NVIDIA’s Graphics Processing Units (GPUs). However, a fundamental shift is occurring. Cerebras Systems, a company armed with massive, purpose-built AI chips, is shattering AI inference speed records and reshaping the semiconductor landscape following its historic May 2026 IPO.

Key Takeaways

- Unmatched Inference Speed: Cerebras’ Wafer-Scale Engine 3 (WSE-3) is a dinner-plate-sized chip boasting 4 trillion transistors, delivering 15x to 20x faster AI inference speeds than NVIDIA’s top-tier GPU clusters.

- OpenAI’s $20 Billion Bet: Seeking independence from NVIDIA, OpenAI signed a massive $20 billion capacity deal with Cerebras to power latency-sensitive models like GPT-5.3 Codex Spark.

- Record-Breaking 2026 IPO: Cerebras (NASDAQ: CBRS) executed the largest tech IPO of 2026, with its stock price surging to $350 on opening day, briefly pushing its valuation past $100 billion.

- The Hardware Divide: The AI hardware market is bifurcating; NVIDIA remains the undisputed king of AI training, while Cerebras is aggressively capturing the real-time AI inference market.

What is Cerebras Systems?

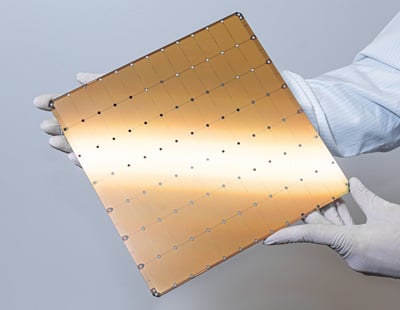

(Source: Cerebras Systems)

Cerebras Systems is an artificial intelligence semiconductor manufacturer headquartered in Sunnyvale, California. Founded in 2015 by a team of five co-founders including CEO Andrew Feldman—a serial entrepreneur who previously sold server company SeaMicro to AMD for $334 million—Cerebras was built on a singular premise: GPUs designed for graphics processing are fundamentally the wrong architecture for AI.

Instead of adapting legacy GPU designs, Cerebras engineered a clean-sheet architecture explicitly optimized for deep learning, creating the infrastructure necessary to power the world’s leading AI research institutions and tech giants.

The Tech Behind the Hype: Wafer-Scale Engine 3 (WSE-3)

The core competitive advantage of Cerebras is its “Wafer-Scale Engine” (WSE) technology. Traditionally, semiconductor manufacturers take a circular silicon wafer and slice it into hundreds of smaller chips. To process massive AI models with trillions of parameters, thousands of these small GPUs must be wired together, creating severe communication bottlenecks (latency) as data travels across network cables.

Cerebras completely bypassed this physical limitation by keeping the entire silicon wafer intact as a single, giant chip. The latest iteration, the WSE-3, is roughly the size of a dinner plate—58 times larger than NVIDIA’s flagship chip.

WSE-3 Hardware Specs:

- 4 Trillion Transistors

- 900,000 AI-optimized Cores

- 44 Gigabytes of on-chip SRAM

- 21 Petabytes/second of memory bandwidth

Because the data never leaves the chip, WSE-3 completely eliminates network latency. For AI “inference” (the process of generating an answer to a user’s prompt), this translates to unprecedented speeds. On large language models like Llama 3.1 70B, Cerebras outputs between 450 to over 2,000 tokens per second—making it up to 20 times faster than the fastest NVIDIA systems.

To truly understand how Cerebras defies traditional semiconductor engineering by solving the fundamental “communication problem” between chips, watch this breakdown of their Wafer-Scale Engine architecture.

Key Takeaways from the Video: The video explains that AI processing is not just a math problem, but a communication problem. Instead of losing speed by moving data across thousands of small GPUs, Cerebras built a single giant wafer with 900,000 cores—58 times larger than Nvidia’s flagship. This effectively eliminates latency bottlenecks, with one piece of silicon replacing up to 60 traditional Nvidia GPUs.

NVIDIA GPUs vs. Cerebras AI Chips: Key Differences

The primary difference between NVIDIA and Cerebras lies in hardware architecture and their specific domains of dominance within the AI lifecycle.

While NVIDIA’s distributed GPU clusters are unmatched for building and “training” massive AI models from scratch, they suffer from data movement latency during “inference”. Cerebras, holding all model data in its massive on-chip SRAM, excels at the real-time inference required for instant AI responses.

| Feature | NVIDIA (e.g., H100 / B200) | Cerebras Systems (WSE-3 / CS-3) |

|---|---|---|

| Architecture | Cluster of thousands of small chips | Single Wafer-Scale Engine |

| Memory Placement | Relies on off-chip HBM (High Bandwidth Memory) | Massive on-chip SRAM |

| Primary Strength | AI Model Training & General Purpose Compute | Ultra-low latency AI Inference |

| Software Ecosystem | Industry-standard “CUDA” platform | Emerging ecosystem compatible with PyTorch |

Data sourced from Cerebras hardware comparisons.

Why OpenAI Exclusively Adopted Cerebras

In January 2026, OpenAI—the creator of ChatGPT—signed a long-term compute provision contract with Cerebras worth up to $20 billion. Notably, OpenAI chose the Cerebras WSE-3 over NVIDIA to exclusively power its real-time coding assistant model, GPT-5.3 Codex Spark.

This move is driven by three strategic pillars:

- The Need for Real-Time Speed: For coding assistants and voice AI agents, even seconds of latency destroy the user experience. Cerebras’ pure speed solves this critical product hurdle.

- The “4-Track” Infrastructure Strategy: OpenAI is actively mitigating its supply-chain risk by diversifying its hardware across NVIDIA, AMD, Cerebras, and its own custom ASICs.

- Deep Financial Integration: OpenAI is not just a customer; they issued a $1 billion zero-interest loan for operating capital to Cerebras and hold warrants to acquire approximately 10% of Cerebras’ equity.

For a deeper dive into the broader market impact of Cerebras’ IPO and why top institutional investors are betting on the shift toward ultra-low-latency infrastructure, this CNBC interview featuring Altimeter Capital’s Brad Gerstner is highly recommended.

Key Takeaways from the Video: Brad Gerstner highlights that the future of AI lies in the “production and consumption of tokens” (inference), a sector where Cerebras excels. He notes that while Nvidia remains dominant, there is room for multiple big winners, and purpose-built inference chips like those from Cerebras are essential to meet the nearly unlimited global demand for low-latency AI compute.

The Largest AI IPO: Cerebras Hits NASDAQ

On May 14, 2026, Cerebras Systems went public on the NASDAQ under the ticker symbol CBRS, marking the largest tech IPO of the year. Originally projected to price between $115 and $125 per share, overwhelming institutional demand—reportedly oversubscribed by 20x—pushed the final IPO price to $185.

Upon market open, the stock skyrocketed to $350 per share, briefly pushing the company’s market capitalization past the $100 billion mark in a historic display of investor enthusiasm for AI infrastructure.

The era of NVIDIA being the sole provider for all AI workloads has ended. As the market matures into a multi-architecture ecosystem, Cerebras is uniquely positioned to dominate the massive, high-margin AI inference sector, forcing businesses and investors to look beyond the model itself and focus on the ultra-low-latency infrastructure powering it.

Frequently Asked Questions (FAQ)

Q: Is Cerebras faster than NVIDIA?

A: Yes, specifically for AI inference. Because Cerebras utilizes a massive, single-wafer chip with entirely on-chip memory (SRAM), it eliminates data transfer bottlenecks, resulting in inference speeds 15x to 20x faster than traditional NVIDIA GPU clusters. However, NVIDIA still maintains dominance in the AI model training phase.

Q: What is the Cerebras ticker symbol and when did it IPO?

A: Cerebras Systems trades on the NASDAQ under the ticker symbol CBRS. The company went public on May 14, 2026, in one of the most highly anticipated technology IPOs in recent history.

Q: Why is the Cerebras chip so large compared to regular computer chips?

A: Cerebras uses a “wafer-scale” architecture. Instead of cutting a 300mm silicon wafer into hundreds of small GPUs that must communicate over slow network cables, Cerebras leaves the wafer intact. This single, dinner-plate-sized chip allows trillions of transistors and AI cores to communicate internally at lightning speeds without external network latency.

Q: Who are Cerebras Systems’ biggest customers?

A: Currently, their most significant customer is OpenAI, which signed a massive $20 billion capacity deal to power real-time AI models. Historically, the company also generated significant revenue from UAE-based entities like G42 and MBZUAI, and works with heavy enterprise clients like GlaxoSmithKline, AstraZeneca, and various US National Laboratories.

- Huxe AI App Shutting Down: A Final Review of the Ultimate Daily Podcast

- RUNNING TRAIN on Steam: The Ultra-Realistic Japanese Train Simulator – PC Specs, Controllers, and AI Driving Explained